The Truth Working Professionals and Lawyers Need to Hear

The Problem Isn't AI. It's Blind Trust.

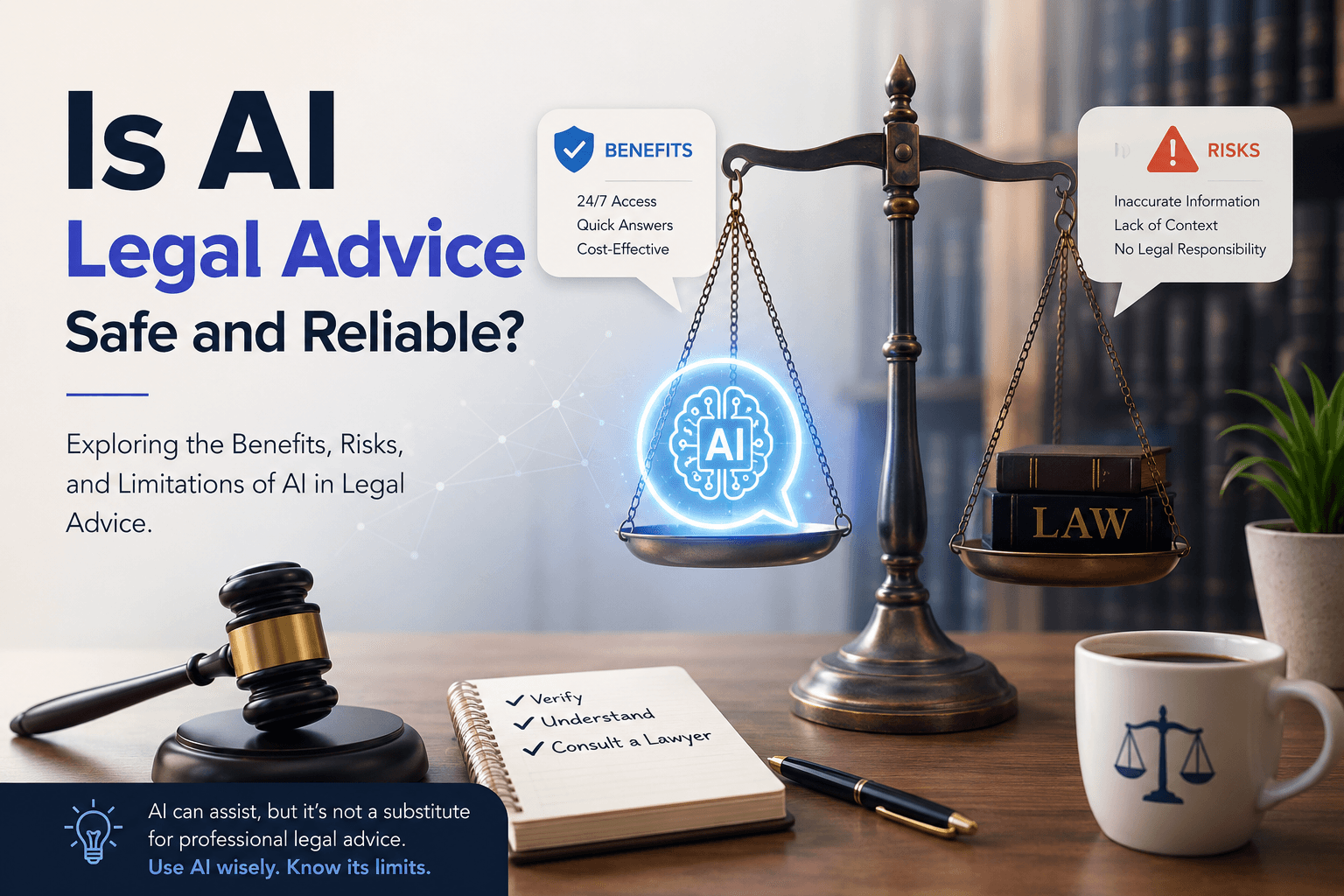

Let's be direct: the issue isn't that AI gives legal information. The issue is that people treat AI output as a final answer rather than a starting point.

Working professionals are busy. They want quick answers. So they type a vague question, get a confident-sounding response, and move forward without verifying a single word. No cross-checking. No context. No professional review.

Young lawyers are doing the same — feeding cases into AI tools, copying the output into briefs, and submitting them to courts without asking whether those citations actually exist.

Both groups are making the same mistake: forgetting that AI doesn't know your case. It knows patterns in text. That's a very different thing.

When AI Gets It Catastrophically Wrong: An Indian Case Study

This isn't a hypothetical risk. It is already happening in Indian courts and tribunals.

In December 2024, the Bengaluru bench of the Income Tax Appellate Tribunal issued an order in the Buckeye Trust case — a ₹669 crore dispute. The order cited three Supreme Court judgments and one Madras High Court ruling. None of them existed. The tribunal had to recall its own order within a week after it emerged that ChatGPT had been used without verification.

Then, in October 2025, the Bombay High Court quashed a ₹27.91 crore income tax assessment after finding that the National Faceless Assessment Centre, a government body, had relied on three non-existent judicial decisions.

The Court's observation deserves to be framed on every lawyer's wall:

"In this era of Artificial Intelligence, one tends to place much reliance on the results thrown open by the system. However, when one is exercising quasi-judicial functions, such results are not to be blindly relied upon."

If the government's own tax authority can fall for this — with trained legal staff — imagine what's happening when everyday professionals and first-year lawyers use AI with no oversight at all.

Who Is Most at Risk?

Two groups are particularly vulnerable, and for very different reasons.

Working professionals handling their own legal matters often turn to AI when they feel they have no choice: when a lawyer seems expensive, inaccessible, or unnecessary for what looks like a "simple" issue. They use AI to understand a notice, draft a reply, or figure out their rights. The problem is that they act on that information without knowing what they don't know. By the time they realise something went wrong, they're sitting in a lawyer's office with a problem that could have been prevented weeks earlier.

Young lawyers face a different trap. They know the law — but they over-rely on whatever AI produces. They use vague prompts, accept the output uncritically, and skip the verification step that any responsible legal professional must take. The result: fabricated citations, incorrect precedents, and in some cases, professional sanctions.

The common thread? Neither group pauses to ask: Is this actually correct? Have I given AI enough context to give me a useful answer?

AI Can Be Genuinely Useful — If You Use It Right

Here's what often gets lost in the debate: AI is a powerful legal tool when used properly.

It can help you understand complex legal language in plain terms. It can help you draft documents faster. It can give you a useful framework for thinking through a legal problem. It can reduce hours of preliminary research to minutes.

But it can only do this well if you meet it halfway.

The single most important thing you can do: Give AI full context before you ask it anything legal.

Don't type: "My landlord kicked me out. What can I do?"

Instead, tell AI exactly what happened — the timeline, what was agreed upon, where things went wrong, what your lease says, and honestly, where you might have been at fault too. The more specific you are, the more useful the response will be.

AI doesn't know what it doesn't know. If you give it half the story, it fills in the blanks — and those blanks can be dangerously wrong.

Use AI for reference. Draft with it. Understand concepts through it. But verify everything before you act on it.

Who Is Responsible When AI Gets It Wrong?

This is the question everyone wants to dodge — and the answer is uncomfortable.

No one can reasonably blame the AI company every time someone acts on incorrect legal output. The tools come with disclaimers. They are not lawyers. They do not know your jurisdiction, your specific facts, or the nuances of your situation.

The responsibility lies with the person who chose to act without verifying.

For lawyers, this is even clearer. You know the law. You have the tools to verify. Using AI without checking its output isn't a tech failure — it's a professional failure. The courts in India have made this explicit. Ignorance of how AI works is no longer a valid excuse.

For working professionals, it means understanding that AI can give you a map — but you still need to check whether the roads on that map actually exist before you start driving.

What About Regulation?

There is a growing conversation about whether India needs formal rules around AI use in legal practice. The Kerala High Court has already issued a policy on AI use in the district judiciary. The Supreme Court has cautioned lawyers and judges against relying on AI output unchecked. Calls for a Bar Council of India expert committee are getting louder.

But regulation will always lag behind technology. AI is developing faster than any legislature can keep up with. Making laws today would mean amending them tomorrow.

The more sustainable answer is user responsibility. Regulation can set the floor. But the standard of care — the actual quality of how AI gets used in legal practice — has to come from the people using it.

The One Thing to Remember

AI will not eliminate your work. It will accelerate it — if you let it. Read what it gives you. Think critically about it. Verify what matters. Use it to do more in less time, not to skip the thinking entirely. The professionals who will thrive in an AI-assisted legal world are not the ones who use AI the most. They're the ones who use it the most intelligently.